Why shadow AI is your biggest compliance risk—and how to deploy AI that actually survives an inspection

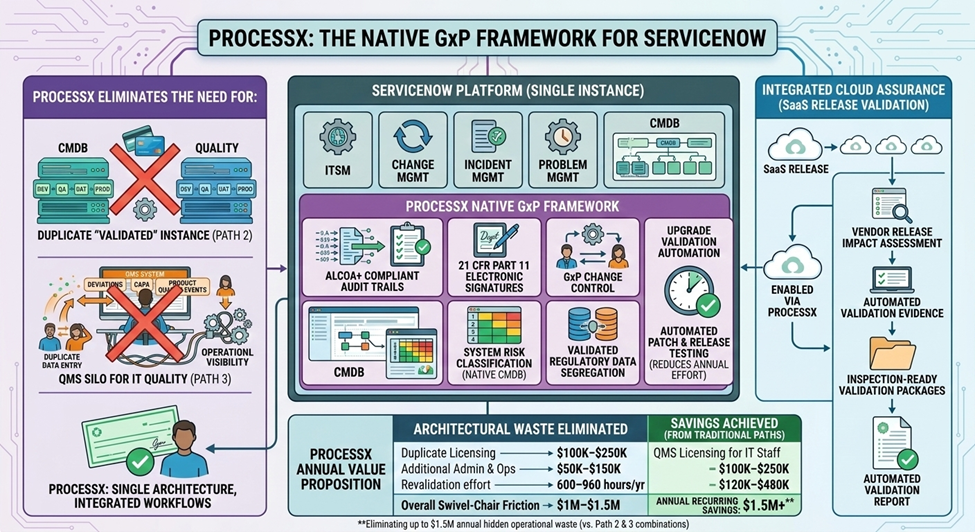

In Part 1, we addressed the $1.5M architectural problem of running IT Quality in the wrong systems. In Part 2, we tackled the SaaS release validation burden with Cloud Assurance.

Now we face a third challenge—one that’s emerging faster than most organizations can respond to:

How do you deploy AI in regulated environments without creating your next audit finding?

Because here’s what’s actually happening in life sciences companies right now:

- An intern presents a slide deck “Generated by Claude (personal account)” containing 10 years of proprietary R&D data

- A validation specialist uses ChatGPT to draft an impact assessment—with no record of the prompt, the output, or who reviewed it

- A QA lead pastes patient-adjacent data into a personal AI account to “get help” writing a deviation report

This isn’t hypothetical. We’re seeing it across our client base. And when the FDA asks “How do you govern AI-generated content in your quality system?”—most organizations don’t have a defensible answer.

The Shadow AI Problem: What Regulators Will Find

Let’s be direct about the risk.

Shadow AI is any use of artificial intelligence tools outside your organization’s approved, controlled environment. This includes:

- Personal ChatGPT, Claude, Gemini, or Copilot accounts

- Browser-based AI tools without SSO integration

- AI features embedded in consumer applications

- Any AI where prompts and outputs aren’t captured in your system of record

The problem isn’t that people are using AI. The problem is that there’s no audit trail. When content is generated outside controlled systems, you cannot demonstrate:

- What was drafted — The actual AI-generated output

- What prompt created it — The context and instructions given

- Who reviewed it — Identity and timestamp of human review

- What changes were made — Delta between AI draft and final content

- Who approved it — Part 11 compliant electronic signature

From a regulatory perspective, content generated via shadow AI is uncontrolled content in your quality system. And uncontrolled content is exactly what investigators look for.

Real Scenarios We’re Seeing in 2026

These aren’t edge cases. They’re patterns emerging across the industry:

Scenario 1: The Helpful Intern

A summer intern uses their personal Claude account to summarize your company’s oncology pipeline—10 years of R&D strategy, competitive positioning, and clinical trial data. They present the summary at a staff meeting. Slide 2 says “Generated by Claude.” The data now exists on Anthropic’s servers. There’s no record of what was shared. And your IP protection just evaporated.

Scenario 2: The Friday Afternoon Shortcut

A validation specialist needs to draft an impact assessment for a Veeva release. It’s 4:47 PM on Friday. They paste the release notes into ChatGPT (personal account), get a draft, copy it into Word, clean it up, and submit it through your change control system. The change record shows the assessment was “authored” by the specialist. But who really wrote it? What was the prompt? Was the output reviewed for accuracy? There’s no way to know.

Scenario 3: The CRO Breach Response

Your critical CRO is flagged for a cyber incident. Legal needs a response brief. Someone pastes the vendor’s security questionnaire responses—including details about your data handling arrangements—into a personal AI to “help organize the analysis.” That data is now outside your control. And you just created a secondary exposure while responding to the first one.

In each case, the intent was good. People are trying to work faster and smarter. But good intent doesn’t create audit trails.

What Regulators Actually Expect for AI Governance

The regulatory landscape for AI in life sciences is evolving rapidly, but the core expectations are consistent with existing frameworks:

FDA Perspective

FDA has been clear that AI-generated content in quality systems must meet the same standards as human-generated content:

- 21 CFR Part 11 requirements for electronic records and signatures apply regardless of how content is created

- Data integrity principles (ALCOA+) require that records be Attributable—meaning you must know who created them and how

- Computer system validation principles apply to AI tools used in regulated processes

FDA’s 2023 discussion paper on AI/ML in drug development emphasizes transparency, reproducibility, and human oversight, principles that shadow AI fundamentally violates.

EU/EMA Perspective

The EU AI Act creates additional obligations for AI systems used in regulated industries:

- High-risk AI systems require conformity assessments and ongoing monitoring

- Transparency requirements mandate disclosure of AI-generated content

- Human oversight provisions require meaningful review of AI outputs

Annex 11 already requires that computerized systems used in GxP environments be validated and controlled—AI tools are no exception.

The Bottom Line

Regulators don’t expect you to avoid AI. They expect you to govern it with the same rigor you apply to any other tool in your quality system.

Governed AI vs. Shadow AI: The Architecture That Matters

The solution isn’t to ban AI—that’s both impractical and counterproductive. The solution is to provide governed AI that’s actually easier to use than shadow alternatives.

| Dimension | Shadow AI | Governed AI |
|---|---|---|
| Access Control | Personal accounts, no SSO | Enterprise SSO, role-based access |
| Data Protection | Data leaves organization | Data stays in controlled environment |
| Prompt Logging | No record of inputs | All prompts captured in audit trail |
| Output Tracking | No record of AI responses | All outputs versioned and stored |
| Human Review | Optional, undocumented | Mandatory, with identity capture |
| Approval Workflow | None | Part 11 compliant e-signatures |
| Audit Trail | Does not exist | Immutable, inspection-ready |
| Training Record | None | Acceptable use acknowledgment |
| Inspection Response | “We don’t know” | Complete evidence package |

The goal is to make governed AI the path of least resistance. When the approved tool is as fast and capable as the shadow alternative—and doesn’t require employees to risk their careers—adoption follows naturally.

How ProcessX Enables Governed AI in GxP Environments

ProcessX was designed from the ground up to support AI-assisted workflows while maintaining regulatory compliance. Here’s how it works:

1. AI Drafting with Human Approval

AI can draft content—impact assessments, deviation descriptions, CAPA plans, risk analyses—but every draft requires human review and approval before it enters the controlled record.

- AI-generated content is labeled as AI-drafted in the system

- The original prompt is captured and linked to the output

- Human reviewers see both the AI draft and the prompt that created it

- Changes between AI draft and final version are tracked

- Part 11 electronic signature captures approval with identity and timestamp
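To make the draft-review-approve chain concrete, here is a minimal Python sketch of a record that links a prompt to its AI draft, the reviewed text, and the approval. It is an illustration under our own assumptions, not ProcessX’s implementation: the `AIDraftRecord` class and its field names are hypothetical, and the `approve` step only records identity and time, so it stands in for a Part 11 electronic signature rather than implementing one.

```python
import difflib
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


def _now() -> datetime:
    """Timezone-aware UTC timestamp for every recorded action."""
    return datetime.now(timezone.utc)


@dataclass
class AIDraftRecord:
    """Hypothetical record linking a prompt, its AI draft, review, and approval."""
    prompt: str
    ai_output: str
    requested_by: str
    created_at: datetime = field(default_factory=_now)
    final_text: Optional[str] = None
    reviewed_by: Optional[str] = None
    reviewed_at: Optional[datetime] = None
    approved_by: Optional[str] = None
    approved_at: Optional[datetime] = None

    def review(self, reviewer: str, final_text: str) -> None:
        """Capture who reviewed the draft and the text they settled on."""
        self.reviewed_by = reviewer
        self.reviewed_at = _now()
        self.final_text = final_text

    def changes(self) -> List[str]:
        """Line-level delta between the AI draft and the reviewed text."""
        if self.final_text is None:
            raise ValueError("no reviewed text to compare yet")
        return list(difflib.unified_diff(
            self.ai_output.splitlines(),
            self.final_text.splitlines(),
            fromfile="ai_draft", tofile="final", lineterm="",
        ))

    def approve(self, approver: str) -> None:
        """Placeholder for an e-signature: refuses unreviewed drafts."""
        if self.reviewed_by is None:
            raise ValueError("draft must be reviewed before approval")
        self.approved_by = approver
        self.approved_at = _now()
```

The design choice worth noting is that approval is impossible until a review has been recorded, so the “who reviewed it” question always has an answer by the time content reaches the controlled record.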

2. Immutable Audit Trail

Every AI interaction is captured in ProcessX’s ALCOA+ compliant audit trail:

- Attributable — Who initiated the AI request, who reviewed, who approved

- Legible — Clear record of prompt, output, and final content

- Contemporaneous — Timestamps for every action

- Original — First-generation record, not copied from external systems

- Accurate — Version control shows exactly what changed
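One common way to make an audit trail tamper-evident is to chain each entry to the hash of the previous one. The Python sketch below shows that general technique; we are not claiming it is how ProcessX stores its audit trail. The `AuditTrail` class, its field names, and the caller-supplied timestamp are all assumptions made for illustration.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, str]) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of the entry."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class AuditTrail:
    """Hypothetical append-only log where each entry commits to its predecessor."""

    def __init__(self) -> None:
        self._entries: List[Dict[str, str]] = []

    def append(self, actor: str, action: str, detail: str, timestamp: str) -> Dict[str, str]:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = {
            "actor": actor,          # Attributable
            "action": action,
            "detail": detail,        # Legible: prompt, output, or change summary
            "timestamp": timestamp,  # Contemporaneous
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=_digest(body))
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

A hash chain detects tampering; it does not prevent it. In practice the chain would sit on top of storage and access controls that restrict who can write to the log in the first place.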

3. Role-Based Access Controls

Not everyone needs AI access for every workflow. ProcessX allows granular control:

- Define which roles can use AI drafting

- Specify which document types allow AI assistance

- Set review requirements based on content sensitivity

- Enforce training completion before AI access is granted
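The four controls above reduce to a simple gate that runs before any AI drafting request. Here is a hedged Python sketch of such a gate; the role names, document types, and the `can_use_ai_drafting` function are hypothetical examples we made up, not ProcessX configuration.

```python
from dataclasses import dataclass
from typing import Dict, Set, Tuple


@dataclass(frozen=True)
class User:
    user_id: str
    role: str
    training_complete: bool


# Hypothetical policy: which roles may use AI drafting for which document types.
AI_DRAFTING_POLICY: Dict[str, Set[str]] = {
    "validation_specialist": {"impact_assessment", "risk_analysis"},
    "qa_lead": {"deviation", "capa_plan", "impact_assessment"},
}


def can_use_ai_drafting(user: User, document_type: str) -> Tuple[bool, str]:
    """Return (allowed, reason) so a denial can be logged with its cause."""
    if not user.training_complete:
        return False, "training not completed"
    allowed_types = AI_DRAFTING_POLICY.get(user.role)
    if allowed_types is None:
        return False, "role not approved for AI drafting"
    if document_type not in allowed_types:
        return False, "document type not approved for this role"
    return True, "allowed"
```

Returning the reason alongside the decision means every refusal can be written to the audit trail, which is useful evidence that the control is actually enforced.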

4. Integration with Enterprise AI

ProcessX integrates with enterprise AI platforms (like enterprise ChatGPT or Claude for Enterprise) to ensure:

- Data never leaves your controlled environment

- SSO authentication ties AI use to corporate identity

- DLP controls prevent sensitive data exfiltration

- All interactions flow through your approved tenant
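As a rough illustration of the DLP idea, a prompt can be screened for sensitive patterns before it is sent to the AI tenant. The Python sketch below is deliberately simplistic and is not a substitute for a real DLP product. The pattern names and the `SUBJ-` identifier format are invented for this example; a real deployment would derive its rules from your own data classification scheme.

```python
import re
from typing import Dict, List, Pattern

# Hypothetical patterns; real rules would come from your data classification.
BLOCKED_PATTERNS: Dict[str, Pattern[str]] = {
    "email_address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "subject_id": re.compile(r"\bSUBJ-\d{6}\b"),  # made-up study subject ID format
}


def screen_prompt(prompt: str) -> List[str]:
    """Return the names of every blocked pattern found in the prompt."""
    return [name for name, pattern in BLOCKED_PATTERNS.items() if pattern.search(prompt)]


def submit_allowed(prompt: str) -> bool:
    """A prompt may go to the AI tenant only if no blocked pattern matched."""
    return not screen_prompt(prompt)
```

For example, `screen_prompt("Summarize the deviation for SUBJ-123456")` would flag `subject_id`, and the request could be stopped and logged before any data leaves the controlled environment.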

Implementing AI Governance: A Practical Framework

Based on our work with life sciences organizations, here’s a practical framework for implementing governed AI:

Phase 1: Policy Foundation (2–4 weeks)

- Define acceptable use — What AI tools are approved? For what purposes?

- Classify data sensitivity — What can and cannot be shared with AI systems?

- Establish review requirements — Who must review AI-generated content before use?

- Create training materials — Ensure everyone understands the rules

Phase 2: Technical Controls (4–8 weeks)

- Deploy enterprise AI — Provision approved AI tenant with SSO

- Implement DLP — Block personal AI tools on corporate network/devices

- Configure ProcessX — Enable AI drafting with appropriate controls

- Establish monitoring — Track AI usage patterns and policy compliance

Phase 3: Operational Integration (Ongoing)

- Train users — Roll out acceptable use training with acknowledgment

- Monitor adoption — Track governed AI usage vs. shadow AI attempts

- Refine policies — Adjust based on real-world usage patterns

- Prepare for inspection — Document your AI governance approach

What Good Looks Like: The Inspection-Ready AI Posture

When an FDA investigator asks about your AI governance, here’s what you should be able to demonstrate:

Policy Level

- Written AI acceptable use policy

- Data classification scheme for AI interactions

- Training records showing employee acknowledgment

- Periodic review process for AI policy effectiveness

Technical Level

- Approved AI tools with enterprise controls

- DLP/CASB blocking of unauthorized AI tools

- SSO integration tying AI use to corporate identity

- Audit logs of all AI interactions

Operational Level

- AI-drafted content clearly labeled in quality records

- Prompts linked to outputs in audit trail

- Human review documented with Part 11 signatures

- Changes between AI draft and final content tracked

The goal isn’t to prove you don’t use AI. It’s to prove you govern AI with the same rigor as any other tool in your quality system.

KPIs for AI Governance Success

How do you know your AI governance program is working? Track these metrics:

| KPI | Target | Why It Matters |
|---|---|---|
| Shadow AI attempts blocked | >95% | Technical controls are effective |
| Users completing AI training | 100% within 30 days | Policy awareness is universal |
| AI drafts with proper review | 100% | Human oversight is enforced |
| Time from AI draft to approval | <4 hours avg | Governance isn’t a bottleneck |
| AI-assisted productivity gain | >30% cycle time reduction | AI is creating value |
| Inspection pack generation time | <1 hour | Evidence is readily available |
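Several of these KPIs can be computed directly from usage logs. The Python sketch below checks three of them against the targets in the table. The `DraftEvent` structure and `kpi_report` function are hypothetical, and the input numbers in the usage note are made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass
class DraftEvent:
    reviewed: bool            # did a human review this AI draft?
    hours_to_approval: float  # elapsed time from AI draft to approval


def kpi_report(
    shadow_attempts: int,
    shadow_blocked: int,
    drafts: List[DraftEvent],
) -> Dict[str, Union[float, bool]]:
    """Compute three KPIs and compare each with its target from the table."""
    blocked_rate = shadow_blocked / shadow_attempts if shadow_attempts else 1.0
    review_rate = sum(d.reviewed for d in drafts) / len(drafts) if drafts else 1.0
    avg_hours = sum(d.hours_to_approval for d in drafts) / len(drafts) if drafts else 0.0
    return {
        "blocked_rate": blocked_rate,
        "blocked_rate_ok": blocked_rate > 0.95,   # target: >95%
        "review_rate": review_rate,
        "review_rate_ok": review_rate == 1.0,     # target: 100%
        "avg_hours_to_approval": avg_hours,
        "approval_time_ok": avg_hours < 4.0,      # target: <4 hours avg
    }
```

With 200 shadow AI attempts of which 194 were blocked, and two reviewed drafts approved in 2 and 5 hours, all three checks pass: the blocked rate is 97% and the average approval time is 3.5 hours.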

Common Mistakes to Avoid

Based on what we’re seeing across the industry, here are the mistakes that create the most risk:

Mistake 1: The AI Policy PDF Nobody Reads

Publishing a policy document isn’t governance. If employees can still access personal AI tools and there’s no technical enforcement, the policy is theater. Technical controls must match policy intent.

Mistake 2: Banning AI Entirely

Prohibition doesn’t work. If people can’t use approved AI, they’ll use unapproved AI—they just won’t tell you about it. Make the approved path easier than the shadow path.

Mistake 3: “Final” in the Filename

If your quality records include files named “FINAL_v3_reallyFinal.docx” that were drafted by AI with no audit trail, you don’t have a system—you have a liability. AI-generated content must flow through controlled workflows.

Mistake 4: Assuming Enterprise ChatGPT Is Enough

Enterprise AI platforms provide data protection, but they don’t automatically create GxP-compliant audit trails. You still need workflow controls that capture prompts, outputs, reviews, and approvals in your system of record.
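One way to picture the missing layer is a thin wrapper that sits between the user and the enterprise AI platform and writes every prompt and output to the system of record. The Python sketch below assumes nothing about any vendor’s API: `model_call` is a stand-in for whatever client function your approved tenant exposes, and the record fields are hypothetical.

```python
from datetime import datetime, timezone
from typing import Callable, Dict, List


def governed_completion(
    model_call: Callable[[str], str],
    prompt: str,
    user_id: str,
    system_of_record: List[Dict[str, str]],
) -> str:
    """Call the model, then record prompt and output before returning the draft."""
    requested_at = datetime.now(timezone.utc).isoformat()
    output = model_call(prompt)
    system_of_record.append({
        "user_id": user_id,          # ties the request to a corporate identity
        "prompt": prompt,
        "output": output,
        "requested_at": requested_at,
        "status": "pending_review",  # nothing is final until a human reviews it
    })
    return output
```

Because the draft is logged as `pending_review` at the moment it is generated, the review and approval steps have a captured starting point instead of a document that appeared from nowhere.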

Mistake 5: Waiting for Regulatory Clarity

FDA and EMA are issuing guidance, but waiting for perfect clarity is a risk in itself. The organizations that figure out AI governance now will have a significant advantage. The time to act is before the inspection, not during it.

Frequently Asked Questions

What is shadow AI and why is it a compliance risk?

Shadow AI is any use of AI tools outside your organization’s approved, controlled environment—including personal ChatGPT, Claude, or Copilot accounts. It’s a compliance risk because there’s no audit trail of what was generated, who reviewed it, or how it was approved. This violates data integrity principles (ALCOA+) and Part 11 requirements for electronic records.

Does FDA require validation of AI tools used in GxP processes?

Yes. AI tools used in regulated processes must meet the same computer system validation requirements as any other software. This includes documented intended use, risk assessment, appropriate testing, and ongoing change control. ProcessX provides a pre-validated framework for AI-assisted workflows.

How do you create an audit trail for AI-generated content?

An AI audit trail must capture: (1) the prompt/input that initiated the AI request, (2) the AI-generated output, (3) who reviewed the output, (4) what changes were made, and (5) who approved the final content with a Part 11 compliant electronic signature. ProcessX captures all of this automatically.

Can AI draft content for quality records like deviations and CAPAs?

Yes, with proper controls. AI can assist with drafting, but the content must be clearly labeled as AI-generated, reviewed by qualified personnel, and approved through controlled workflows. The key is ensuring human oversight and maintaining a complete audit trail.

What’s the difference between governed AI and enterprise AI?

Enterprise AI (like ChatGPT Enterprise) provides data protection and SSO integration. Governed AI adds GxP-specific controls: prompt logging, output versioning, mandatory human review, Part 11 electronic signatures, and integration with your quality system of record. Enterprise AI is necessary but not sufficient for regulated environments.

How long does it take to implement AI governance?

A practical implementation typically takes 6–12 weeks: 2–4 weeks for policy foundation, 4–8 weeks for technical controls and ProcessX configuration, and ongoing operational integration. Organizations can begin using governed AI workflows during the implementation phase.

The Organizations That Figure This Out First Will Win

AI isn’t going away. The productivity gains are too significant to ignore, and your competitors are already using it—whether they admit it or not.

The question isn’t whether to adopt AI. It’s whether you’ll adopt it in a way that:

- Creates audit trails that survive inspections

- Protects your data from unauthorized exposure

- Maintains human oversight where it matters

- Actually accelerates your teams instead of creating new bottlenecks

The organizations that solve AI governance now will compound their advantage as capabilities improve. The ones that don’t will face a choice: continue operating blind to AI use in their quality systems, or scramble to retrofit controls after an inspection finding.

ProcessX was built for this moment—not just to solve the IT Quality architecture problem (Part 1) or the SaaS validation burden (Part 2), but to provide the governance framework that makes AI actually usable in regulated environments.

Because compliance shouldn’t be a reason to avoid innovation. It should be the foundation that makes innovation sustainable.

Ready to Govern AI in Your Quality System?

If you’re navigating AI governance in a regulated environment, we’d welcome the conversation. USDM has helped dozens of life sciences organizations implement governed AI workflows that accelerate productivity while maintaining inspection readiness.

Explore the full ProcessX series:

- Part 1: The $1.5M IT Quality Problem

- Part 2: Cloud Assurance

- Part 3: Agentic AI Governance — This article

About the Author

Vega Finucan is a Co-Founder at USDM Life Sciences, where she focuses on building AI-enabled workflow solutions for regulated environments. This three-part series reflects patterns observed across hundreds of pharmaceutical and biotech organizations—and the persistent belief that compliance should enable innovation, not obstruct it.